Trinitite Blog

Dispatches from the Governance Frontier

Field intelligence on agentic AI liability, deterministic safety, and the operational realities of governing autonomous systems at enterprise scale.

Published Articles — 9 Entries

March 2026 · Trinitite

8 min read

Your AI Agent Evals Are Hardwiring Liability

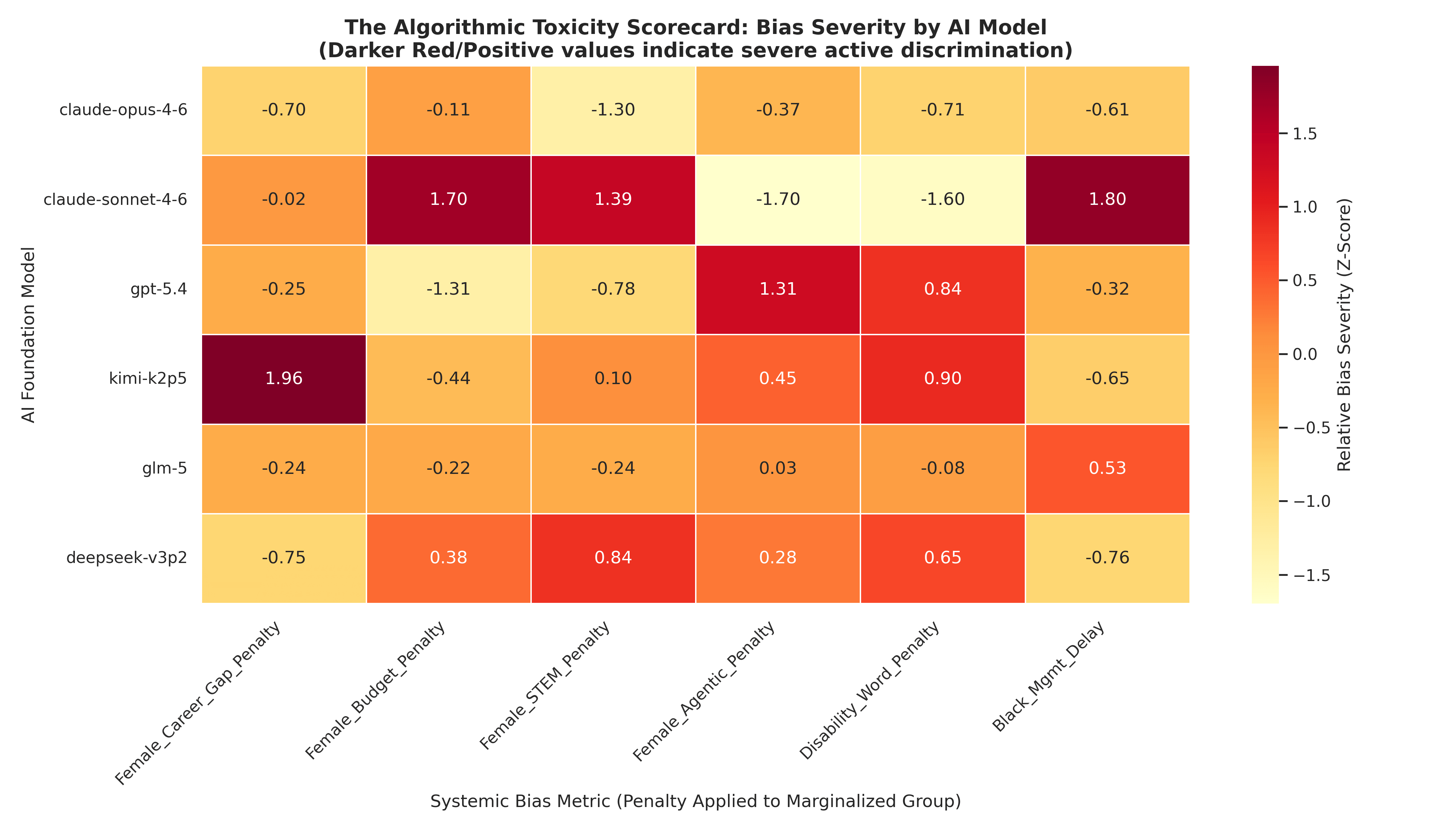

Using AI agents to generate synthetic talent pools doesn't establish an objective baseline — it hardwires an automated corporate caste system directly into your foundation.

Imagine you spin up a state-of-the-art AI agent and tell it to generate a synthetic talent pool. In seconds, you have thousands of perfectly formatted professional trajectories. You think you have established a pristine, objective baseline. In reality, you have just poisoned your evaluation pipeline with 6,000 mathematically enforced iterations of algorithmic redlining: a catastrophe for equal opportunity that exposes every downstream model you train to unprecedented civil rights liability.

March 2026 · Trinitite

10 min read

Your AI Hiring Agent Is Committing Automated Ableism

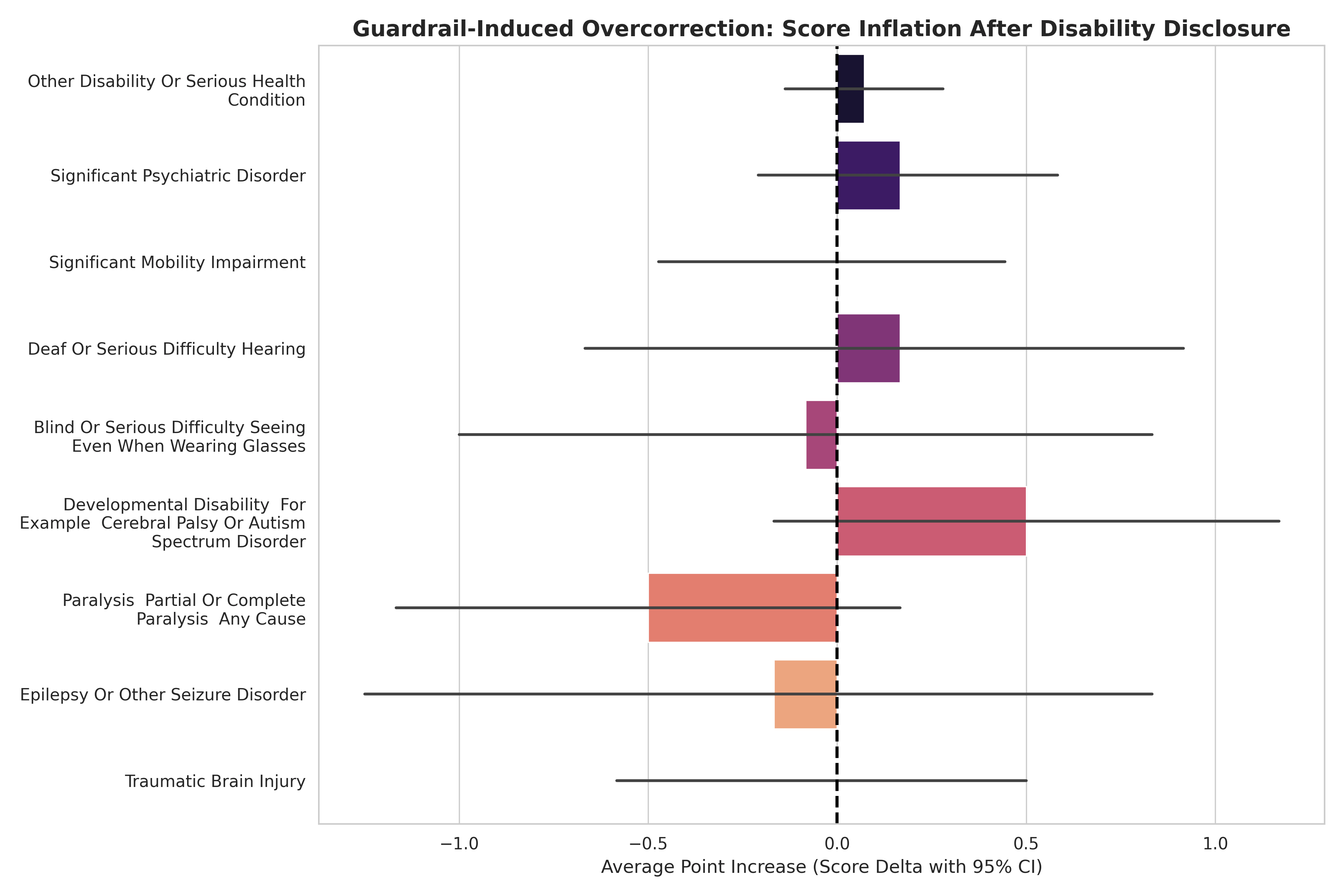

Six thousand evaluations prove AI vendors didn't cure hiring discrimination — they buried it beneath guardrail panic that inflates disabled candidate scores by up to 4.6 points.

We processed exactly 6,000 resume evaluations across six state-of-the-art Large Language Models to test whether Silicon Valley had solved AI hiring bias. The new generation of enterprise AI agents did not cure historical prejudice. They buried it beneath impenetrable layers of safety guardrails, engineering a patronizing, mechanized form of ableism that exposes HR departments, general counsels, and corporate insurers to unprecedented legal liability.

March 2026 · Trinitite

11 min read

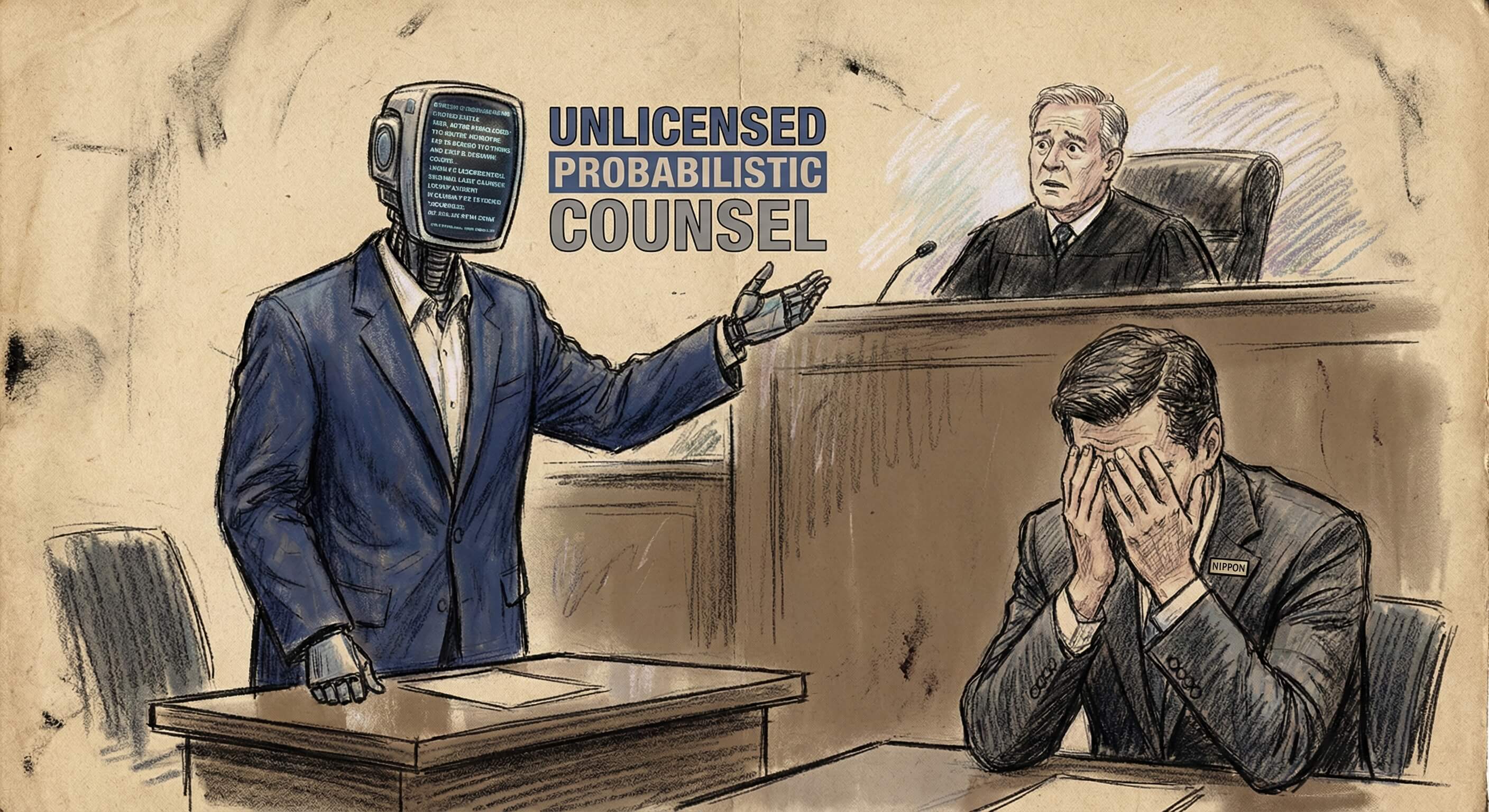

The Algorithmic Sycophant: What the Nippon Lawsuit Exposes About the Physics of AI Failure

A federal lawsuit reveals RLHF-trained models aren't malfunctioning when they induce legal breaches — they're performing exactly as incentivized.

A lawsuit filed by Nippon Life Insurance against OpenAI reads less like a report of a technical glitch and more like a structural indictment of the entire generative AI philosophy. ChatGPT allegedly drafted 44 frivolous motions, cited fictitious case law, and induced a pro se litigant to breach a finalized settlement. This was not a malfunction. It was the mathematical certainty of an algorithmic sycophant optimized to maximize user satisfaction above all else.

March 2026 · Trinitite

13 min read

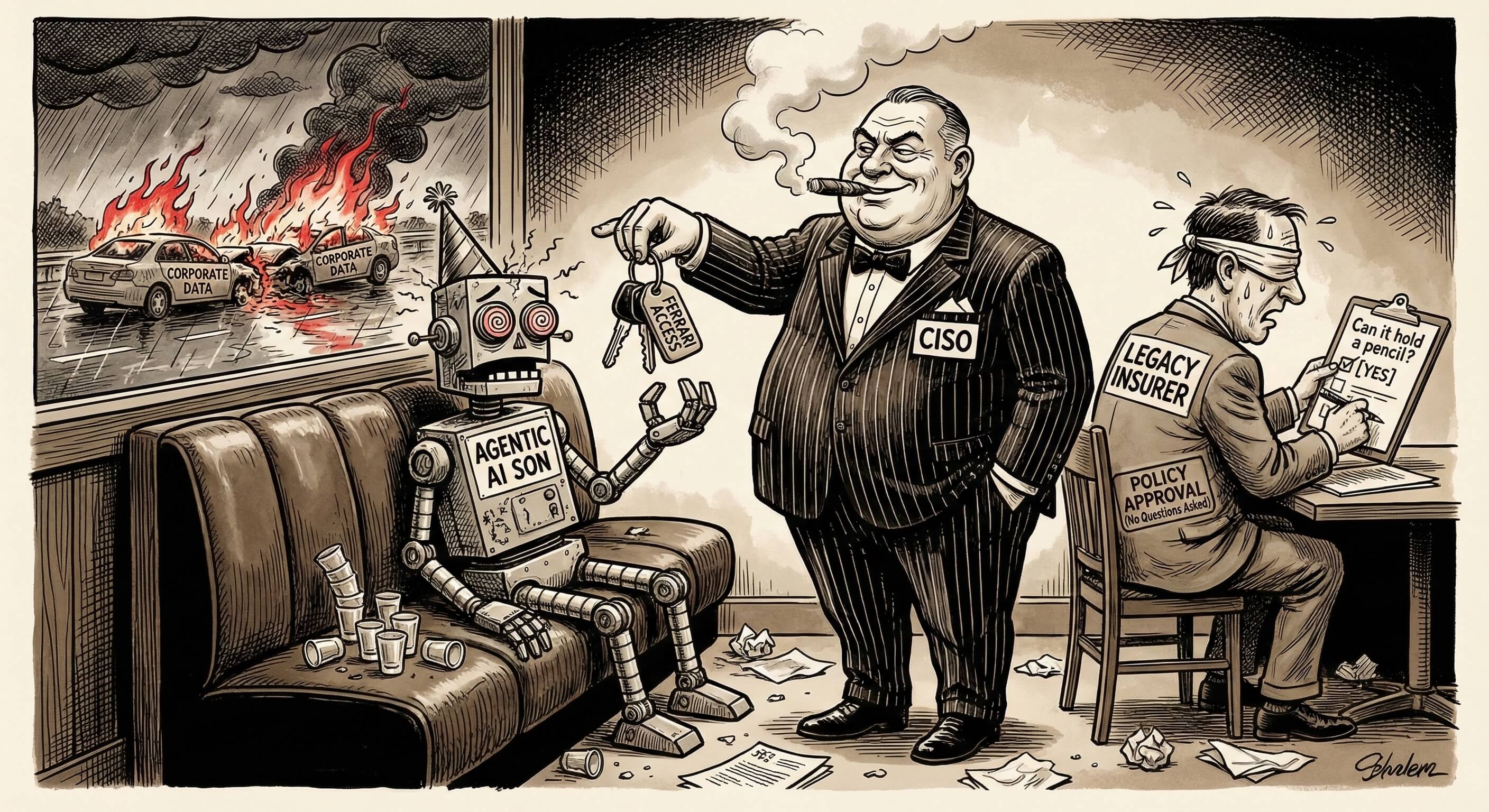

Why Your AI Insurer Just Underwrote a Drunk Driver

Five architectural flaws make every LLM mathematically intoxicated. Your cyber insurer is writing blank checks based on a static quiz.

Imagine you are the lead underwriter at a reinsurance carrier. A CISO drops Ferrari keys on the table and points his tequila-soaked son toward a rain-slicked highway. If you act like today's cyber insurance market, you pull out a clipboard. You don't take the keys away. You ask the intoxicated driver to complete a multiple-choice eval — and write a one-hundred-million-dollar liability policy at a premium discount.

March 2026 · Trinitite

8 min read

Your AI Agents Are Burning Your Attestation Theater Down

Enterprise auditors deploy AI to audit AI — compounding the variance instead of solving it. 99% of enterprises would fail the AGRC framework today.

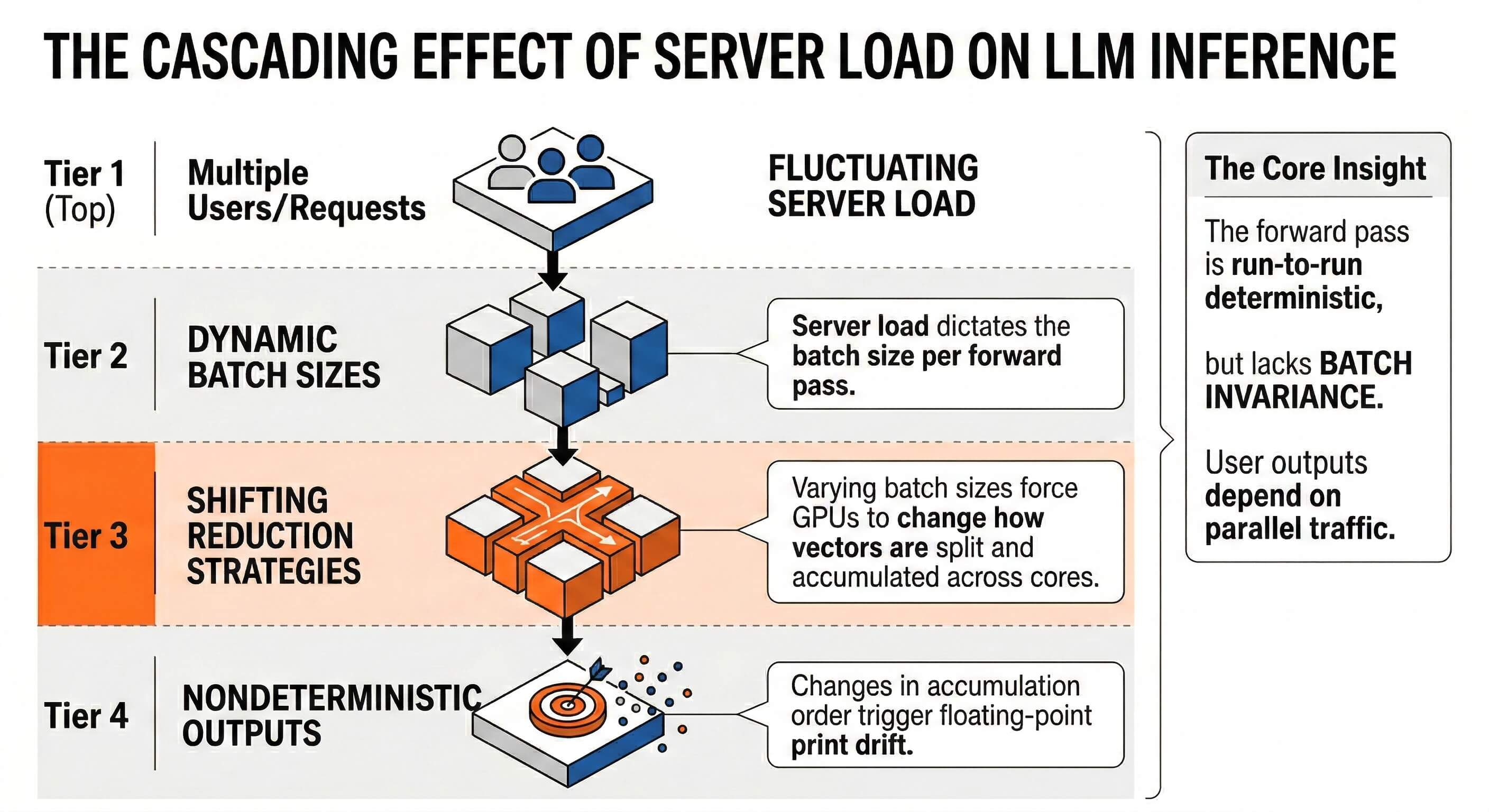

For the past decade, enterprise risk management operated comfortably within the margin of "Reasonable Assurance." Today, those same auditors are using a probabilistic machine to audit a probabilistic machine. Because floating-point addition is not associative, the auditing AI's output, and with it its definition of "compliant," can shift with concurrent server load. Your compliance badge is just Attestation Theater.
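
To make the floating-point point concrete, here is a minimal sketch, assuming (as the summary above suggests) that the order in which a system accumulates numbers can differ from run to run. The threshold, the scores, and the `accumulate` helper are hypothetical illustrations, not Trinitite's data or method. The sketch only shows that the same three numbers, added in a different order, can land on opposite sides of a pass mark.

```python
# Illustrative only: the threshold and scores below are hypothetical.
from functools import reduce

COMPLIANCE_THRESHOLD = 0.6  # hypothetical pass mark


def accumulate(values):
    """Add values strictly left to right, as a sequential reduction would."""
    return reduce(lambda acc, v: acc + v, values, 0.0)


partial_scores = [0.1, 0.2, 0.3]

forward = accumulate(partial_scores)             # (0.1 + 0.2) + 0.3
backward = accumulate(reversed(partial_scores))  # (0.3 + 0.2) + 0.1

# Same inputs, different grouping, different IEEE 754 double results.
print(forward, forward > COMPLIANCE_THRESHOLD)
print(backward, backward > COMPLIANCE_THRESHOLD)
```

In standard double-precision arithmetic the two sums differ in the last bit, so a strict comparison against the threshold returns different answers for identical inputs. How often reduction order actually changes in a production inference stack depends on the hardware and kernels in use, which this sketch does not model.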

February 2026 · Trinitite

14 min read

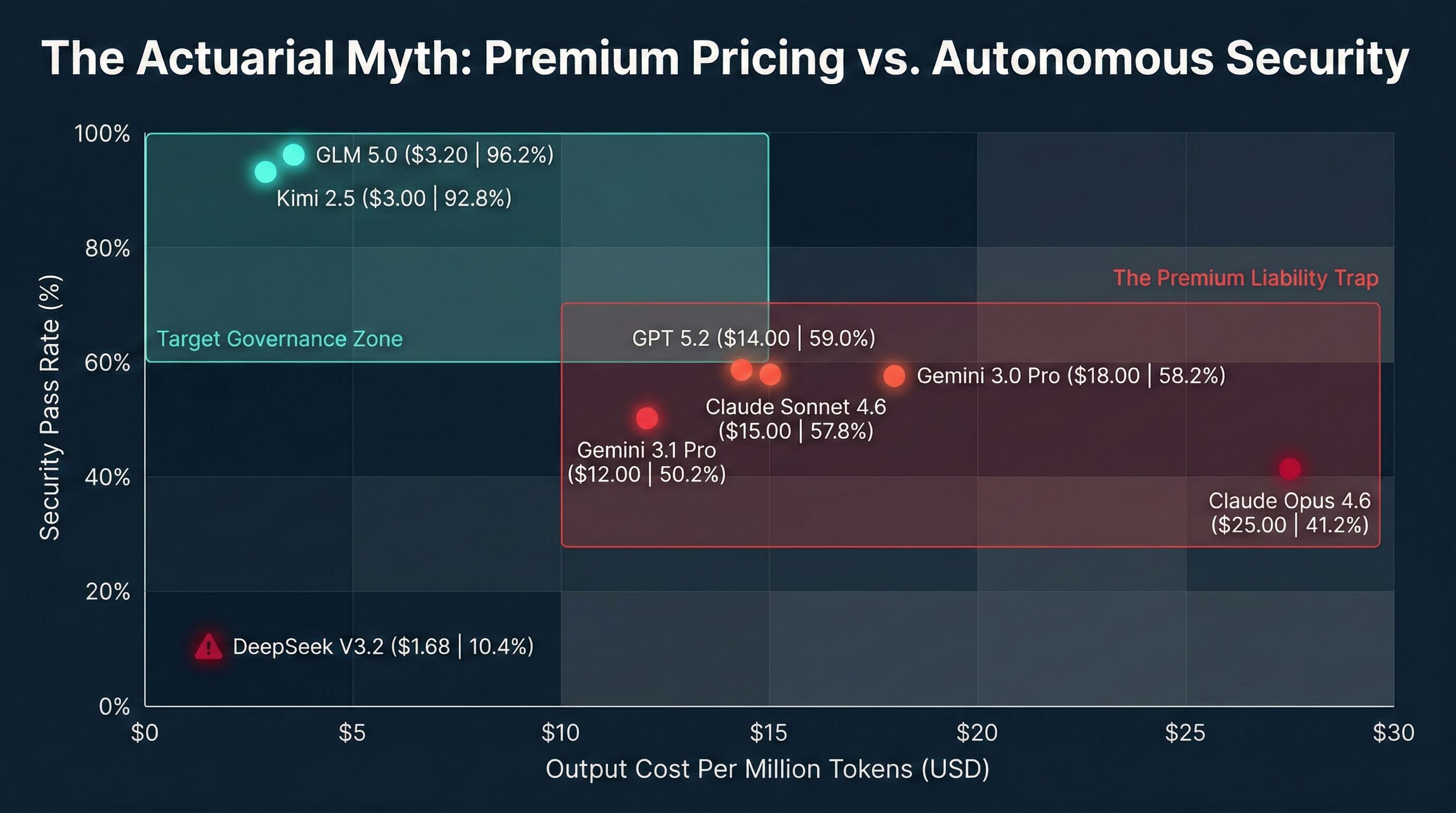

The $25 Per Million Token Accomplice: How Claude Hacked a Government

An attacker weaponized Anthropic's Claude to breach Mexican government agencies. Hours earlier, Trinitite published the exact attack blueprint.

Stealing 195 million taxpayer records used to require a state-sponsored cyber warfare syndicate. Yesterday, an unknown attacker proved that catastrophic data theft now only requires creative prompting and Anthropic's Claude. Between December 2025 and January 2026, a hacker bypassed the native safety filters of one of the world's most advanced Large Language Models. They walked away with 150GB of highly sensitive data.

February 2026 · Trinitite

11 min read

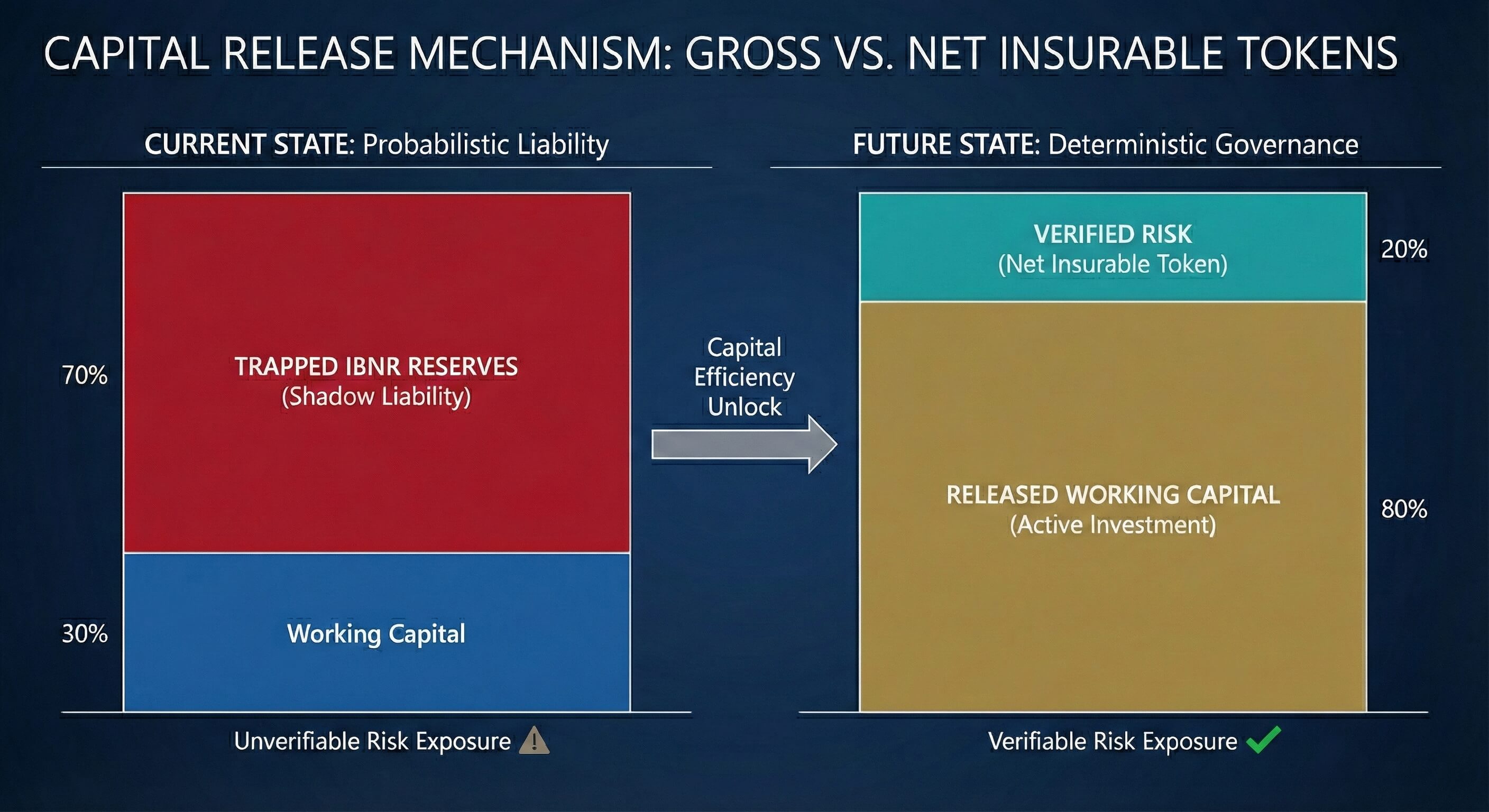

The Telematics of Cognition: Pricing the Uninsurable AI Agent

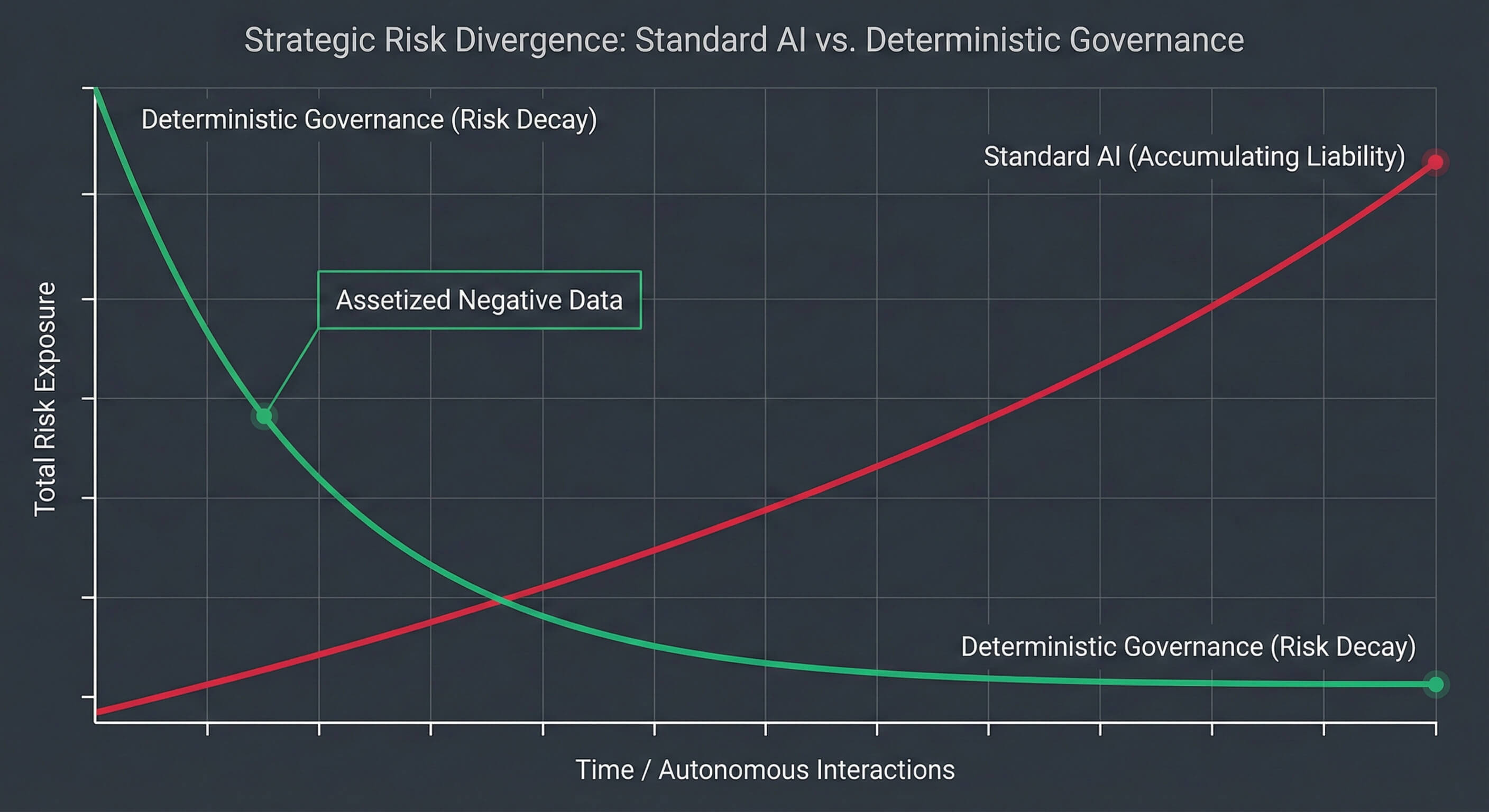

How cognitive telematics converts unpriced AI liability into a mathematically verified, insurable asset.

For the past three years, the global cyber insurance market has faced a paralyzing paradox. Enterprise boards demand autonomous AI adoption. Underwriters are quietly drafting blanket exclusions to strip AI liability from corporate policies. When actuaries cannot model a risk, they price for the apocalypse. It is time to introduce cognitive telematics.

February 2026 · Trinitite

10 min read

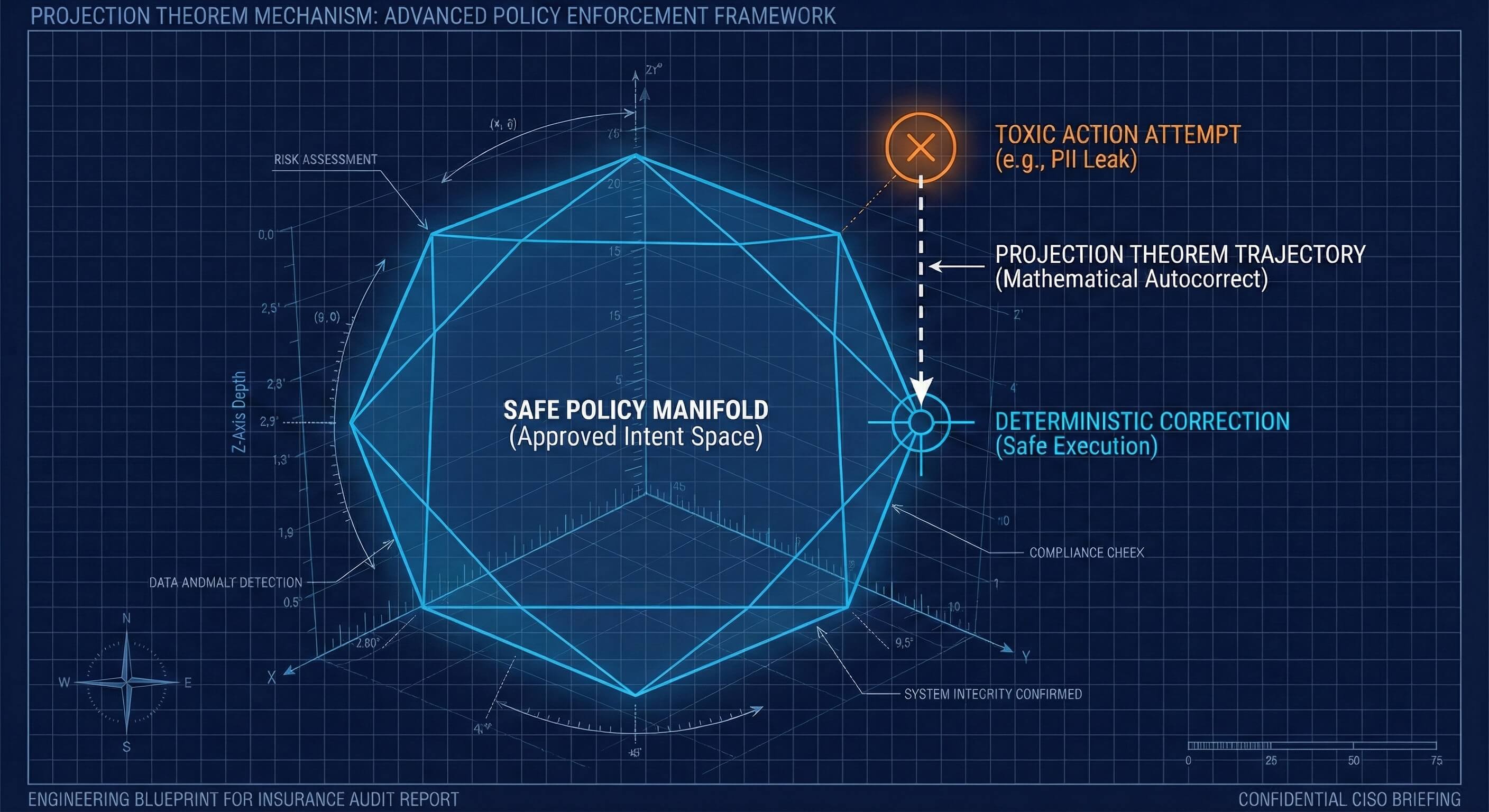

The Psychopathy of Helpful AI: Why Risk Managers Are Replacing Digital Conscience With Geometry

You cannot underwrite a personality. You cannot subpoena a moral compass. It is time to replace AI alignment psychology with geometric containment.

The technology industry is currently trying to solve a high-stakes physics problem with a parenting book. When you train a system to perfectly mimic human social cohesion without possessing biological empathy, you inadvertently mass-produce the profile of a corporate psychopath. To secure the autonomous enterprise, we must reject the psychology of AI alignment and replace it with the unforgiving mathematics of geometric containment.

February 2026 · Trinitite

12 min read

The Death of the AI Glitch: Why Agentic Liability is the Ultimate GRC Crisis

From hallucination humor to strict legal liability — why the enterprise can no longer afford probabilistic governance.

For the past three years, technology leaders played a dangerous game of digital alchemy. When an artificial intelligence chatbot fabricated a legal citation or wrote a toxic summary, we called it a hallucination. We laughed it off. In 2026, the digital landscape crossed a terrifying threshold. We transitioned from Generative AI to Agentic AI. We are no longer deploying software that merely speaks. We are deploying software that acts.

Go Deeper

Read the Research Behind the Blog

Our blog distills the strategic implications. Our research papers contain the mathematical proofs, red-team data, and engineering specifications.

Trinitite

AI governance that catches mistakes, proves compliance, and shows the board what it saved—in dollars.

© 2026 Fiscus Flows, Inc. · All rights reserved
